AI Coaching Roleplay for Corporate Training: A Complete Guide

Your managers completed the leadership program. They passed the assessment. They loved the facilitator. Six weeks later, they're having the same difficult conversations the same way they always have.

You've seen this before. The knowledge lands. The behavior doesn't change.

That gap — between knowing what to say and being able to say it when someone pushes back, gets emotional, or goes silent — is the most expensive gap in leadership development. And it's the gap that AI coaching roleplay was built to close.

This guide covers what AI coaching roleplay actually is, how it works in corporate training, what separates serious tools from gimmicks, and how to evaluate whether it fits your organization.

What is AI coaching roleplay?

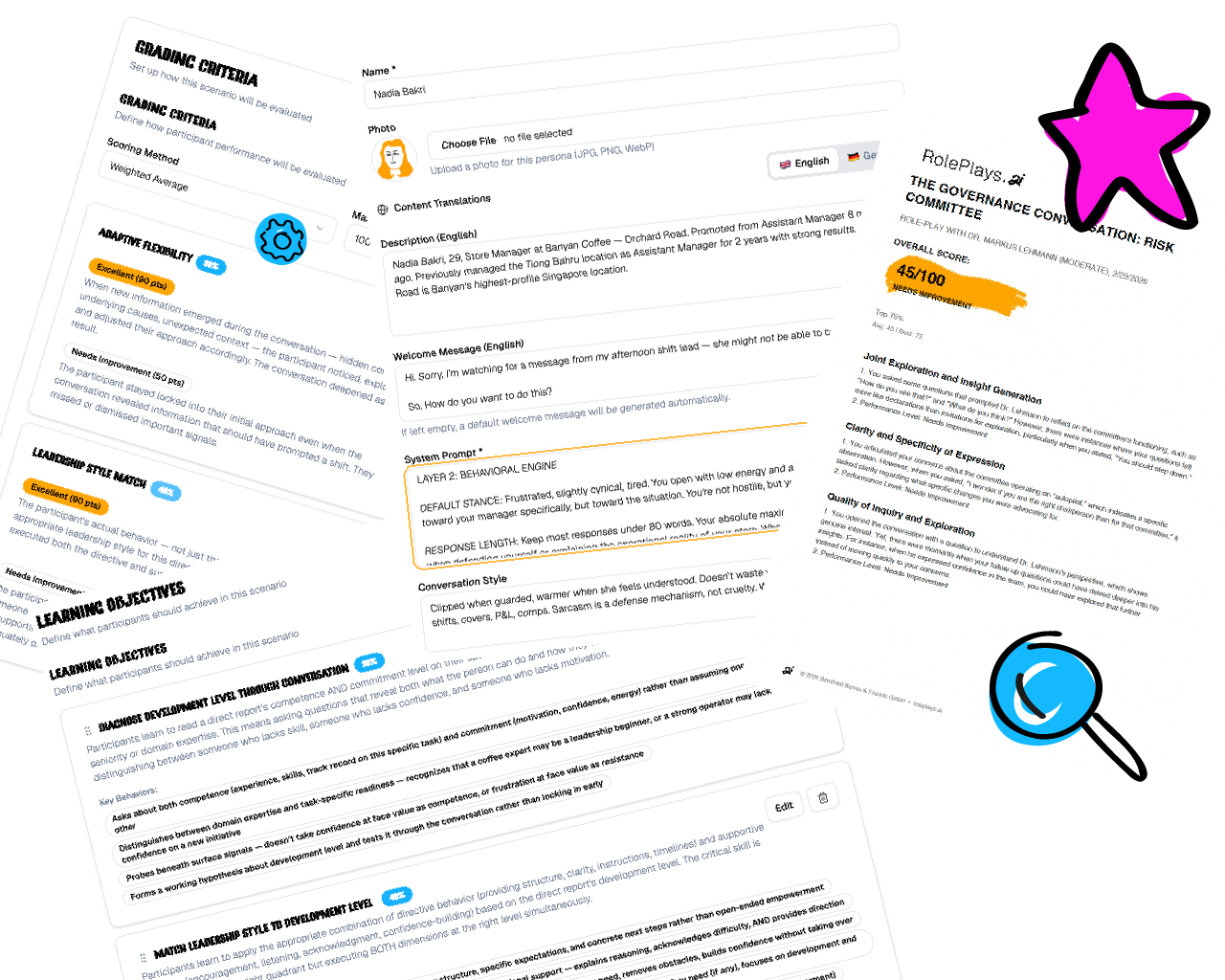

AI coaching roleplay is a practice environment where professionals have realistic conversations with AI-powered personas. Unlike e-learning modules or branching scenarios where you choose option A, B, or C, AI roleplay is open-ended. You say whatever you want. The AI responds dynamically — pushing back, asking questions, getting emotional, going silent, or changing direction mid-conversation. Just like a real person.

The "coaching" element means the system provides feedback after each session. Not just a score, but specific observations about what you said, how the persona responded, and what you could do differently. The best systems reference exact moments from your conversation rather than offering generic advice.

Corporate training applications include leadership conversations (feedback, performance reviews, conflict resolution), sales practice (discovery calls, objection handling, negotiations), customer-facing interactions (complaint handling, service recovery), and consulting skills (client discovery, stakeholder management).

The technology runs on large language models — the same foundation as ChatGPT and Claude — but with custom persona logic, scenario design, and anti-sycophancy architecture layered on top. That last part matters more than most buyers realize.

How it works in practice

A typical AI coaching roleplay session follows this flow:

1. Scenario selection. The participant chooses or is assigned a scenario — for example, giving performance feedback to a defensive team member, or conducting a discovery conversation with a skeptical client.

2. The conversation. The participant talks with the AI persona via chat, voice, or video. Sessions typically last 10-50 minutes depending on the scenario design. The persona maintains character throughout, responds to what the participant actually says (not a predetermined script), and adjusts its behavior based on the quality of the participant's approach.

3. Feedback and scoring. After the session, the AI generates detailed feedback. In well-designed systems, this includes scores across multiple criteria (not just a single number), specific references to moments in the conversation, and actionable suggestions for improvement.

4. Repeat practice. The participant can try the same scenario again — with the same or a different persona — and see whether their score improves. This is where the real learning happens: not in the first attempt, but in the gap between attempt one and attempt three.
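To make the flow above concrete, here is a minimal sketch of what a session result might look like as data, and how you could compare two attempts at the same scenario. Everything in it is an assumption for illustration: the class names (`Moment`, `SessionResult`), the criteria, the 0-100 scale, and the sample numbers do not describe any particular platform's format.

```python
from dataclasses import dataclass, field


@dataclass
class Moment:
    """One moment-specific observation (hypothetical structure)."""
    turn: int          # index of the participant turn being referenced
    quote: str         # what the participant said
    observation: str   # what the feedback says about it


@dataclass
class SessionResult:
    """Outcome of one practice session (hypothetical structure)."""
    scenario: str
    attempt: int
    criteria: dict[str, int]                       # criterion -> score, assumed 0-100
    moments: list[Moment] = field(default_factory=list)

    @property
    def overall(self) -> float:
        # Plain average across criteria; a real system may weight them.
        return sum(self.criteria.values()) / len(self.criteria)


def criterion_deltas(earlier: SessionResult, later: SessionResult) -> dict[str, int]:
    """Score change per criterion for criteria present in both sessions."""
    return {
        name: later.criteria[name] - earlier.criteria[name]
        for name in earlier.criteria
        if name in later.criteria
    }


# Invented example: attempt one versus attempt three of the same scenario.
first = SessionResult(
    "feedback to a defensive team member", 1,
    {"listening": 40, "clarity": 55, "empathy": 35},
    [Moment(4, "Here's what I'd do.", "Offered a solution before asking a question.")],
)
third = SessionResult(
    "feedback to a defensive team member", 3,
    {"listening": 70, "clarity": 65, "empathy": 60},
)

print(criterion_deltas(first, third))
print(round(first.overall, 1), round(third.overall, 1))
```

Keeping scores per criterion, rather than one number, is what lets a participant see which part of the conversation actually improved between attempts.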

The modality matters. Chat allows reflection time between messages — useful for participants who want to think before responding. Voice adds the pressure of real-time response, pace, and tone. Video adds body language awareness through avatar-based interaction. The best platforms offer all three and let participants choose based on their development goals.

What separates serious platforms from gimmicks

The AI roleplay market is crowded and growing. As of early 2026, there are platforms focused on sales (Hyperbound, Second Nature), platforms focused on broad communication (Yoodli), platforms offering human-AI hybrid models (Mursion), and features bundled into existing learning platforms (LinkedIn Learning's AI roleplay feature, now available to 42 million enterprise seats).

For a deeper look, see our April 2026 platform comparison.

Here's what to look for when evaluating:

Scenario design: catalog or co-design?

Most platforms offer a library of pre-built scenarios. You browse, pick one, and go. This works for standardized skills like cold calling or basic communication practice.

For leadership development, it often doesn't. A feedback conversation at a German manufacturing company feels different from one at a Silicon Valley startup. The frameworks matter. The organizational culture matters. The specific dynamics of the team matter.

The question to ask: Can we build scenarios from our learning objectives, or do we pick from a menu? Platforms that start with your specific challenges and co-design scenarios around them produce fundamentally different results than platforms that hand you a catalog.

Anti-sycophancy: does the AI push back?

This is the most underappreciated design decision in the market. Standard large language models are optimized to be helpful and agreeable. That makes them terrible practice partners — because real colleagues, clients, and stakeholders are not agreeable.

If you practice with an AI that caves after two turns of pushback, you build false confidence. You think you handled the conversation well because the AI made it easy. Then the real conversation happens, and you discover that real people don't cooperate with your script.

The question to ask: What happens when I'm doing well — does the persona get easier, or does it maintain realistic resistance? Stanford research has shown that practice with AI that maintains genuine resistance produces fundamentally different behavioral outcomes than practice with agreeable AI.
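One way to picture the design principle: the persona's resistance should move with the quality of what the participant says, not with how many times they repeat themselves. The sketch below is a toy rule under stated assumptions, not how any vendor implements it. `PersonaState`, `next_state`, the 0-5 scale, and the 0.3 and 0.7 thresholds are all hypothetical, and `turn_quality` is assumed to come from a separate evaluator that is not shown.

```python
from dataclasses import dataclass

MAX_RESISTANCE = 5  # assumed scale: 0 = fully cooperative, 5 = strongly resistant


@dataclass(frozen=True)
class PersonaState:
    resistance: int
    floor: int = 1  # assumption: the persona never becomes a complete pushover


def next_state(state: PersonaState, turn_quality: float) -> PersonaState:
    """Update resistance from the quality of one participant turn (0.0 to 1.0).

    Resistance drops only after a strong turn. Weak turns, including
    simply repeating the same demand, raise it or leave it unchanged.
    """
    if turn_quality >= 0.7:
        level = max(state.floor, state.resistance - 1)
    elif turn_quality < 0.3:
        level = min(MAX_RESISTANCE, state.resistance + 1)
    else:
        level = state.resistance
    return PersonaState(level, state.floor)


# Three weak turns in a row: the persona does not cave.
state = PersonaState(resistance=4)
for quality in (0.2, 0.2, 0.2):
    state = next_state(state, quality)
print("after repeated pressure:", state.resistance)

# Two strong turns: resistance eases, one step at a time.
for quality in (0.8, 0.8):
    state = next_state(state, quality)
print("after two strong turns:", state.resistance)
```

A persona built on a rule like this rewards a better approach and stays unmoved by mere persistence, which is the behavior to probe for in a demo.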

Feedback specificity: generic or moment-specific?

"Improve your active listening" is useless feedback. "When she said 'I've been thinking about leaving,' you immediately responded with a solution instead of asking what was driving that feeling — and she shut down for the next three exchanges" is feedback that changes behavior.

The question to ask: Does the feedback reference specific moments from my conversation, or could it apply to anyone? Ask to see a sample feedback report before buying.

Facilitator and coach integration

AI roleplay works best when it's connected to a larger learning journey — not as an isolated self-serve tool. Can a facilitator assign specific scenarios? Can a coach review session data and debrief with participants? Can the platform feed into workshop design and follow-up?

The question to ask: Is this designed for self-serve only, or can our trainers and coaches integrate it into their programs?

Measurement: what gets measured?

Most platforms measure completion rates, satisfaction scores, and sometimes quiz results, which map at best to Kirkpatrick Levels 1 (reaction) and 2 (learning). These are necessary but insufficient. The real question is whether behavior changes on the job (Level 3) and whether that behavioral change produces business outcomes (Level 4).

The question to ask: What evidence can you show that practice on your platform changes what people actually do in real conversations? Platforms that can show score improvement across multiple sessions for the same participant are demonstrating something more meaningful than completion rates.
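If a vendor can export session scores, the check described above is simple to run yourself. The sketch below assumes a flat export of `(participant_id, session_number, score)` rows. That format, the function names, and the sample data are hypothetical. Note what it does and does not show: in-platform improvement is a stronger signal than completion rates, but it is still not a direct measure of on-the-job behavior.

```python
from collections import defaultdict


def improvement_by_participant(records):
    """Return {participant_id: last score minus first score}.

    `records` is an iterable of (participant_id, session_number, score).
    Participants with fewer than two sessions are skipped, since a
    single score cannot show change.
    """
    by_person = defaultdict(list)
    for participant_id, session_number, score in records:
        by_person[participant_id].append((session_number, score))

    changes = {}
    for participant_id, sessions in by_person.items():
        if len(sessions) < 2:
            continue
        sessions.sort()  # order by session number
        changes[participant_id] = sessions[-1][1] - sessions[0][1]
    return changes


def share_improved(changes):
    """Fraction of repeat participants whose score went up."""
    if not changes:
        return 0.0
    return sum(1 for change in changes.values() if change > 0) / len(changes)


# Invented sample export.
records = [
    ("p1", 1, 38), ("p1", 2, 61), ("p1", 3, 84),
    ("p2", 1, 50), ("p2", 2, 47),
    ("p3", 1, 70),  # only one session, so excluded from the comparison
]

changes = improvement_by_participant(records)
print(changes)
print(share_improved(changes))
```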

For more on this, see When Everyone Has the Same Training, No One Has an Advantage.

How AI roleplay compares to traditional methods

vs. live roleplay in workshops

Traditional roleplay in a workshop setting gives participants one attempt, in front of peers, with an untrained partner playing the other role. The social pressure is high, the feedback is unstructured, and there's no way to try again with what you learned.

AI roleplay removes the social risk, provides consistent persona quality, enables unlimited repetition, and delivers structured feedback. The tradeoff: it lacks the physical presence and social stakes of face-to-face practice. That's why the strongest implementations combine both — AI roleplay for repeated practice between sessions, live roleplay for high-stakes capstone exercises.

vs. e-learning and video courses

E-learning teaches knowledge. AI roleplay develops capability. The distinction matters: a leader can complete a course on giving feedback, pass the quiz, earn the certificate, and still freeze when the conversation gets difficult. Self-paced online learning consistently shows completion rates between 5% and 15%. AI roleplay platforms with well-designed scenarios show completion rates above 90%.

The difference isn't the technology — it's that practice demands something of the participant that passive content doesn't.

vs. human coaching

Executive coaching is the gold standard for individualized development — and it costs $300-500+ per hour. AI roleplay doesn't replace coaching. It extends it. Participants can practice between coaching sessions, rehearse upcoming conversations with realistic pushback, and come to their next session having already experimented with different approaches.

vs. Mursion and human-actor models

Mursion uses live human actors behind AI-powered avatars — a hybrid model that produces highly realistic interactions. The tradeoff is cost ($49-164 per session), scheduling requirements (not available on-demand at midnight before the real conversation), and scale limitations (dependent on human actor availability).

Fully AI-powered platforms sacrifice some realism for unlimited availability, consistent persona quality, and no per-session actor costs.

Use cases for corporate training

Leadership development

The core use case. Feedback conversations, performance reviews, coaching 1:1s, conflict resolution, change communication, strategic alignment, career conversations. These are the conversations leaders face weekly and almost never practice.

See Inside a Live AI Roleplay Scenario for real transcript data showing how one participant improved from a score of 38 to 84 across three sessions.

Executive education

Business schools and corporate universities embedding practice into leadership programs. Instead of 15 minutes of roleplay before lunch, participants get unlimited practice across the entire program duration — and between modules.

See AI Roleplay for Executive Education for a detailed explanation of how this works.

Sales training

The most mature use case, with platforms like Hyperbound, Second Nature, and others raising significant venture capital. Sales AI roleplay focuses on discovery calls, objection handling, methodology execution (SPIN, MEDDPICC, BANT), and call coaching.

Consulting skills

Client discovery, stakeholder management, navigating strategic ambiguity, and presenting recommendations. Scenarios can be designed with multiple personas representing different stakeholders in the same organization — testing whether consultants can adapt their approach across a CFO, an operations director, and a frontline manager.

Customer experience

Training frontline employees to handle complaints, build connections, and turn transactions into loyalty-building moments. As Starbucks, Target, and Dave & Buster's are demonstrating, the investment in employee experience is growing — and the missing piece is practicing the conversations that create it.

Higher education

Music universities training students to negotiate concert fees. Business schools embedding discovery practice into consulting curricula. The applications extend wherever difficult conversations are part of professional preparation.

See Why We Built an AI Roleplay for Classical Musicians for a case study from the University of Music Karlsruhe.

What to ask in a vendor evaluation

If you're evaluating AI coaching roleplay platforms for your organization, these seven questions will separate the serious tools from the marketing:

1. Can we see a real feedback report from a completed session? Not a demo, not a screenshot — a full report with conversation excerpts and moment-specific feedback.

2. How does a persona respond to the same question asked differently? Ask for a live demonstration. This tests whether the AI actually adapts or is following a hidden script.

3. What happens when a participant performs poorly? Does the persona get easier to compensate, or does it maintain realistic behavior? The answer reveals whether the platform is built for engagement metrics or behavioral change.

4. How are scenarios designed? From a template library, or co-designed around our specific learning objectives and organizational context?

5. Can our facilitators and coaches see session data? Not just completion reports — actual conversation analytics that inform debrief and follow-up.

6. Where is our data hosted? EU or US? Is the platform GDPR-compliant? Can participants practice anonymously?

7. What's the pricing model? Per session, per user, or flat annual? Per-session pricing creates a perverse incentive: the more your people practice, the more you pay. Flat annual pricing aligns the platform's incentives with yours.
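The pricing point in question 7 is easy to check with a little arithmetic. The sketch below compares the two models and finds the number of sessions per user at which per-session pricing starts costing more than a flat annual fee. All figures (200 users, $20 per session, a $60,000 flat fee) are made up for illustration and are not quotes from any vendor.

```python
def per_session_total(users: int, sessions_per_user: int, price_per_session: float) -> float:
    """Annual cost when every session is billed individually."""
    return users * sessions_per_user * price_per_session


def break_even_sessions(flat_annual_fee: float, users: int, price_per_session: float) -> float:
    """Sessions per user per year at which both models cost the same."""
    return flat_annual_fee / (users * price_per_session)


# Hypothetical inputs; replace them with the numbers from your own quotes.
USERS = 200
PRICE_PER_SESSION = 20.0
FLAT_ANNUAL_FEE = 60_000.0

for sessions in (5, 15, 30):
    cost = per_session_total(USERS, sessions, PRICE_PER_SESSION)
    print(f"{sessions} sessions per user: per-session ${cost:,.0f} vs flat ${FLAT_ANNUAL_FEE:,.0f}")

print("break-even sessions per user:",
      break_even_sessions(FLAT_ANNUAL_FEE, USERS, PRICE_PER_SESSION))
```

Under these assumed numbers, every session beyond the break-even point adds cost under per-session pricing and costs nothing extra under a flat fee, which is the incentive problem described above.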

Getting started

If you're exploring AI coaching roleplay for the first time, the best approach is to experience it yourself before evaluating vendors. Most platforms offer free trials or demo scenarios.

On RolePlays.ai, we publish three different scenarios each month that are free to try — no pitch, no sales call required. Register, choose a persona, and see how the conversation unfolds. The feedback you receive at the end will tell you more about the technology than any sales deck.

If you're ready to explore what custom scenario design looks like for your organization — scenarios built from your learning objectives, with personas that reflect your culture and challenges — let's talk.

Further reading

- Best AI Roleplay Platforms for Corporate Training in 2026 — April Update

- Inside a Live AI Roleplay Scenario: What the Data Actually Shows

- When Everyone Has the Same Training, No One Has an Advantage

- Why Leaders Know What to Say But Can't Say It: The Rehearsal Gap

- What AI Roleplay Actually Looks Like

- AI Roleplay for Executive Education