Stop Measuring Training. Start Designing for Behavior Change.

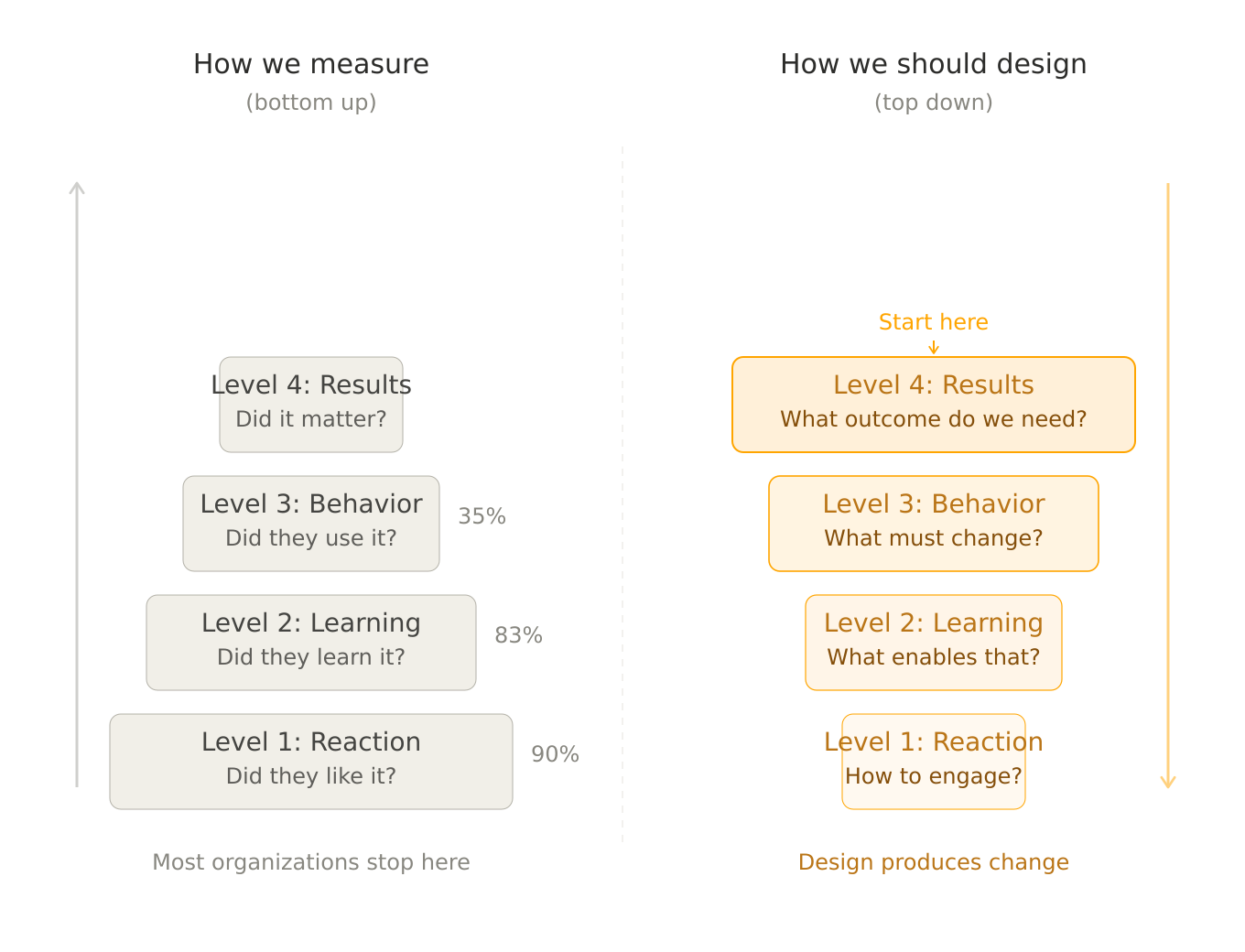

Donald Kirkpatrick published his four-level evaluation model in 1959. Sixty-seven years later, the corporate learning industry still uses it the same way: as a report card applied after the fact.

Level 1 — did they like it? Level 2 — did they learn something? Level 3 — did their behavior change? Level 4 — did it produce business results?

According to ATD research, 90% of organizations measure Level 1 and 83% measure Level 2, but only 35% consistently evaluate Level 3 or Level 4.

This suggests that most corporate training is designed to produce satisfaction and knowledge, on the hope that behavior change and business results will follow on their own. They usually don't.

The problem isn't Kirkpatrick's model. The problem is that we use it backwards. We design training, deliver it, and then measure what happened. What if we started from the other end?

Kirkpatrick as a design methodology

The idea is simple: instead of evaluating from Level 1 up, design from Level 4 down.

Start with Level 4: What business outcome do you need? Not "better leaders" — something specific. Reduced turnover in a division. Faster decision-making in cross-functional teams. Higher conversion rates in client presentations. Fewer compliance incidents in a regulated environment.

Then Level 3: What behavior change would produce that outcome? If the business outcome is reduced turnover, the behavioral change might be: managers have honest career conversations instead of avoiding them. If the outcome is fewer compliance incidents, the behavioral change might be: speakers handle hostile Q&A without going off-script or making unsupported claims.

Then Level 2: What knowledge and skills enable that behavior? This is where frameworks, models, and content live. But notice — the content is now selected because it supports a specific behavioral change, not because it's interesting or comprehensive.

Then Level 1: What experience makes people want to engage? Design for engagement last, not first. A training that scores 9/10 on satisfaction but produces no behavioral change has failed. A training that scores 7/10 on satisfaction but measurably changes how people show up on Monday morning has succeeded.

This inversion changes everything about how you build training. It changes what you teach, how you teach it, and — critically — what you practice.

What this looks like in practice: two examples

Example 1: Pharma speaker preparation

A pharmaceutical company needs doctors to present the science behind a new medication at peer conferences. The doctors are brilliant researchers. Their presentations are detailed, accurate, and thorough.

The problem is the Q&A.

When colleagues challenge the methodology, cite contradicting studies, or ask about edge cases the data doesn't cover, the speakers struggle. Some get defensive. Some make claims that go beyond the approved data. Some freeze. The business risk isn't embarrassment — it's compliance exposure and credibility loss in a highly regulated environment.

Level 4 outcome: Speakers represent the science accurately under pressure, with zero off-label statements, while maintaining credibility among peers.

Level 3 behavior: When challenged with a contradicting study, the speaker acknowledges the data, explains the differences in methodology or population, and stays within the boundaries of what their research supports — without becoming defensive or dismissive.

Level 2 knowledge: Speakers understand the landscape of competing studies, know the limitations of their own data, and have frameworks for responding to different types of challenges (methodological, statistical, clinical).

Level 1 experience: Speakers feel prepared and confident, not lectured at.

Now — what's the training? Not another slide deck about the data. They already know the data. The training is practice: a simulated Q&A where AI personas play skeptical colleagues who cite real contradicting studies, challenge the methodology, and press on weak points. The speakers practice staying composed, staying on-label, and responding with scientific credibility rather than defensive bluster.

The scenario is designed backwards from the Level 4 outcome. Every persona question, every escalation, every moment of pressure exists because it maps to a specific behavioral change that produces the business result.

Example 2: Leadership feedback conversations

A company is losing high performers. Exit interviews reveal a consistent pattern: people don't leave because of compensation. They leave because their manager never had an honest conversation with them about their development, their frustrations, or their future.

Level 4 outcome: Reduce voluntary turnover among top-quartile performers by 20% within 12 months.

Level 3 behavior: Managers initiate career conversations quarterly — not as HR-mandated check-ins, but as genuine dialogue. They ask before they tell. They listen to frustration without immediately solving it. They make commitments they follow through on.

Level 2 knowledge: Managers understand career conversation frameworks, active listening techniques, and the difference between coaching and directing.

Level 1 experience: Managers feel the training is relevant to their actual challenges, not generic.

Again — what's the training? Not a workshop on "how to have career conversations." The managers already know they should have these conversations. The problem is that they avoid them, or have them badly, or default to reassurance instead of honesty.

The training is practice: AI personas who play high performers showing early signs of disengagement. One is frustrated but won't say it directly. Another has an offer from a competitor but hasn't mentioned it yet. A third has been passed over for a project and is questioning whether they're valued.

Each persona is designed to test whether the manager can read the signals, create space for honesty, and respond to what's actually happening — not what the manager assumes is happening. The scenario is scored on whether the manager uncovers the real issue, not whether they deliver a polished speech.

Why most training doesn't produce Level 3

The reason is structural, not motivational. Most training programs are designed from Level 1 up:

Start with engaging content (Level 1). Add a knowledge framework and assessment (Level 2). Hope that participants apply what they learned (Level 3). Retrospectively try to attribute business results to the training (Level 4).

The leap of faith between Level 2 and Level 3 is where most learning investment goes to die. Participants leave the workshop knowing what to do. They return to an environment where the pressures, habits, and incentives are exactly as they were before. Without practice (repeated, realistic, feedback-driven practice), the knowledge decays and the behavior doesn't change.

The research supports this. A meta-analysis by Fu & Li (2025) covering 12 studies and 907 participants found an effect size of 0.82 for roleplay-based methods compared with traditional instruction, a large effect by Cohen's widely used benchmarks, where 0.8 marks the threshold for "large." The likely mechanism: practice bridges the gap between knowing and doing. Without it, Level 2 doesn't become Level 3.
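To make the 0.82 figure more concrete, here is a small sketch. It assumes the reported number is a standardized mean difference (such as Cohen's d or Hedges' g) and that scores in both groups are roughly normal with equal spread; neither assumption is stated in the text above. Under those assumptions, the effect size converts to Cohen's U3: the share of the traditional-instruction group scoring below the average roleplay participant.

```python
from statistics import NormalDist

# Effect size reported by Fu & Li (2025). Treating it as a standardized
# mean difference is an assumption made for this illustration.
effect_size = 0.82

# Cohen's U3: proportion of the comparison group scoring below the mean of
# the roleplay group, assuming normal distributions with equal variance.
u3 = NormalDist().cdf(effect_size)

print(f"U3 = {u3:.0%}")  # prints "U3 = 79%"
```

Read this way, the average roleplay participant outperformed roughly four out of five participants taught with traditional methods. If the underlying score distributions are skewed or have unequal spread, the conversion is only approximate.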

What this means for AI roleplay

AI roleplay is not the only way to practice. But it's the first method that makes repeated, realistic, feedback-driven practice scalable. Before AI, the options were: live roleplay in workshops (one attempt, limited time, untrained partners), coaching (expensive, scheduling-dependent), or on-the-job learning (real consequences, no feedback loop).

AI roleplay removes these constraints. A manager can practice the same career conversation five times in a week. A pharma speaker can face twenty different challenging questions before the real conference. A consultant can test their discovery approach with three different stakeholder personas in a single afternoon.

But only if the scenario is designed from Level 4 backwards.

A scenario pulled from a generic library and deployed to "check the AI roleplay box" is Level 1 thinking applied to new technology. It will produce the same result as the workshop it replaced: satisfaction without behavior change.

A scenario designed from a specific business outcome, with personas that test specific behavioral changes, scored against criteria that map to Level 3 — that's something different. That's training designed to produce what the organization actually needs.

How to start

If you're designing a leadership development initiative, a speaker training program, or any corporate training where behavior change matters more than knowledge transfer, try this before building anything:

Write down the Level 4 outcome in one sentence. Not "better leaders." Something measurable, something specific, something your CEO would recognize as a business result.

Then ask: what would people need to do differently to produce that result? That's your Level 3.

Then ask: what would they need to know to do that? That's your Level 2.

Then — and only then — ask: how do we make this experience engaging enough that they actually show up and stay? That's your Level 1.

If you design in that order, you'll build training that produces change. If you design in the reverse order — which is what most of the industry does — you'll build training that produces applause.

If you want to explore what Level 4-backwards design looks like for your organization — with AI roleplay scenarios built from your specific business outcomes — let's talk.

References

Kirkpatrick, D. L. (1959). Techniques for evaluating training programs. Journal of the American Society of Training Directors, 13, 21-26.

Fu, X., & Li, Q. (2025). Effectiveness of role-play method: A meta-analysis. International Journal of Instruction, 18(1), 309-324.

Association for Talent Development (ATD). Training evaluation practices research.